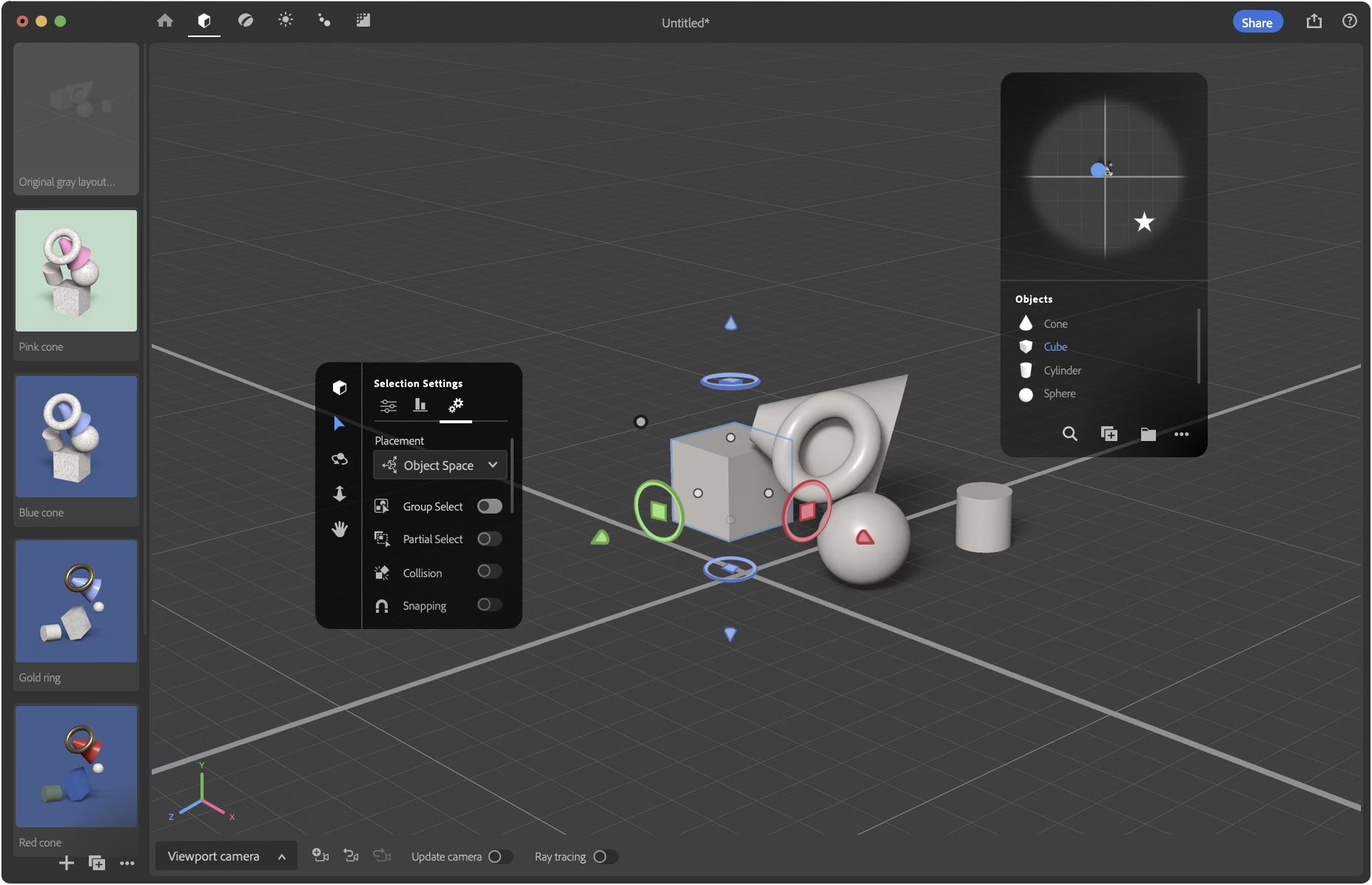

2020 Photoshop interface featuring Neural Filters. // Source: Stan Horaczek, Popular Science

This screenshot is from the early days of genAI and shows Adobe’s first public genAI feature. Neural Filters > Smart Portrait > Expressions allowed Photoshop users to manipulate the emotional expressions of faces using semantic sliders. Before I joined the team, the feature was referred to as Emotions, and there was uncertainty about how to implement the underlying technology. I knew from my own dissertation research that it would be more accurate to describe it as expressions, and I helped adjust its functionality to make it easier for users. Had the team continued to call it Emotions, Adobe would have risked the public criticism that others later faced when implementing similar tech (e.g., “Microsoft to retire controversial facial recognition tool that claims to identify emotion,” The Verge).

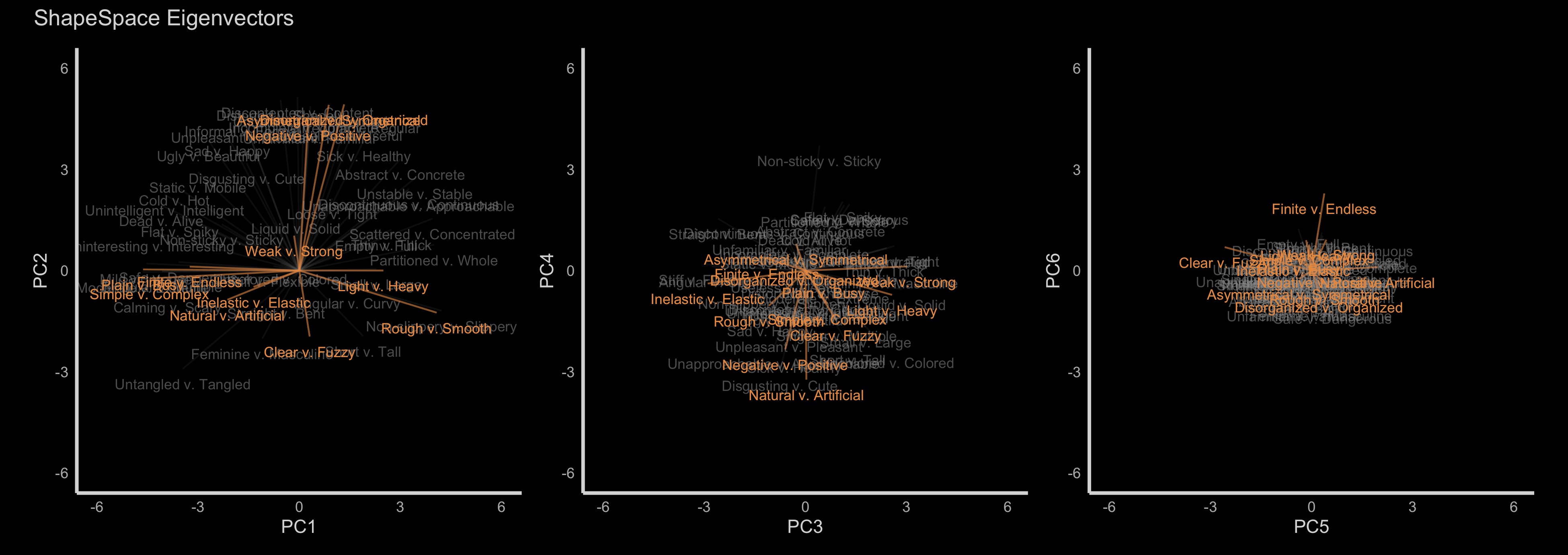

My dissertation work was related to my work at Adobe. Above are semantic differentials mapped into a six-dimensional space.

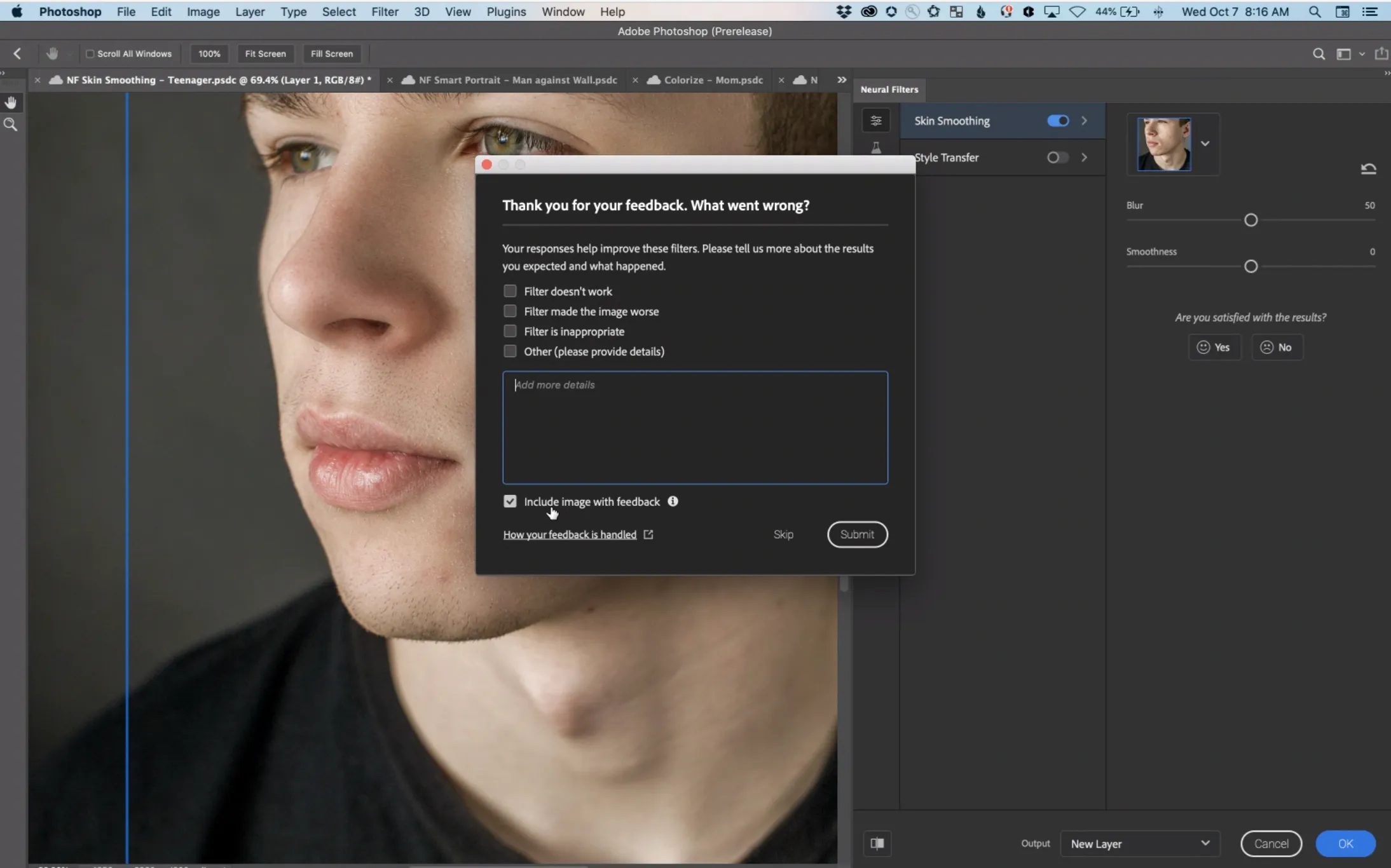

Neural Filters’ AI feedback mechanism is another example of my influence. Many at the time did not understand that genAI could generate upsetting results. I knew this because of ongoing work by colleagues in graduate school. Across many meetings, I gradually convinced enough stakeholders to launch Adobe’s first AI feedback mechanism. It set an important precedent, but it could have been something greater. The ideal UX would create a clean pipeline for retraining and improving the underlying models. As a data scientist, I knew this UX couldn’t do that. The experience taught me how difficult it is for established teams to transform the way they think about product development.

Adobe’s first in-app feedback mechanism. // Source: James Vincent, The Verge